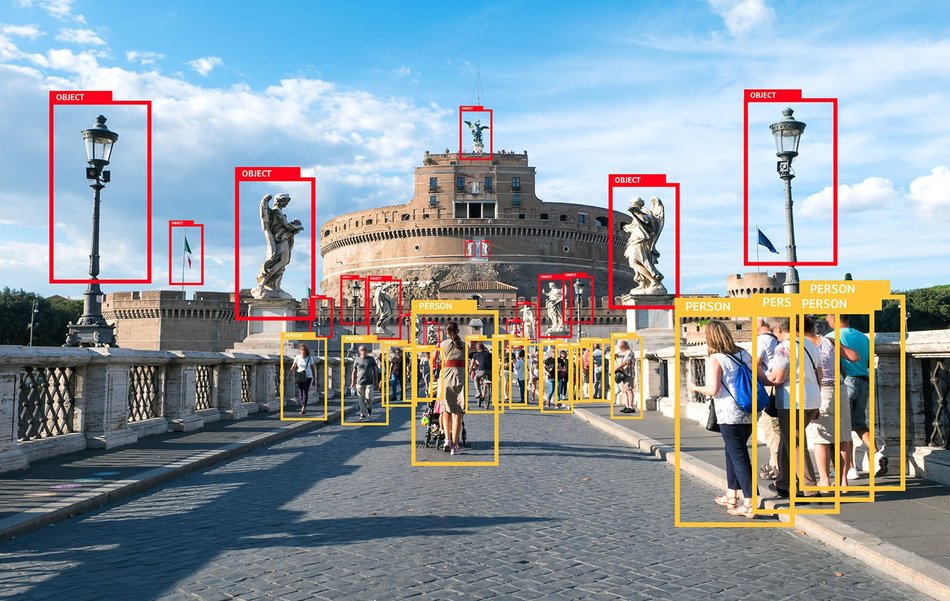

Today, we begin a new mini-series that marks a slight change in the direction of the series. Previously, we have talked about the history of synthetic data (one, two, three, four) and reviewed a recent paper on synthetic data. This time, we begin a series devoted to a specific machine learning problem that is often supplemented by the use of synthetic data: object detection. In this first post of the series, we will discuss what the problem is, where the data for object detection comes from, and how you can get your network to detect bounding boxes like the ones below (image source).

If you have had any experience at all with computer vision, or have heard one of many introductory talks about the wonders of modern deep learning, you probably know about the image classification problem: how do you tell cats and dogs apart? Like this (image source):

Even though this is just binary classification (a question with yes/no answers), this is already a very complex problem. Real-world images “live” in a very high-dimensional space, on the order of millions of features: for example, mathematically a one-megapixel color photo is a vector of about three million numbers (one million pixels times three color channels)! Therefore, the main focus of image classification lies not in the actual learning of a decision surface (that separates classes) but in feature extraction: how do we project this huge space onto something more manageable where a separating surface can be relatively simple?

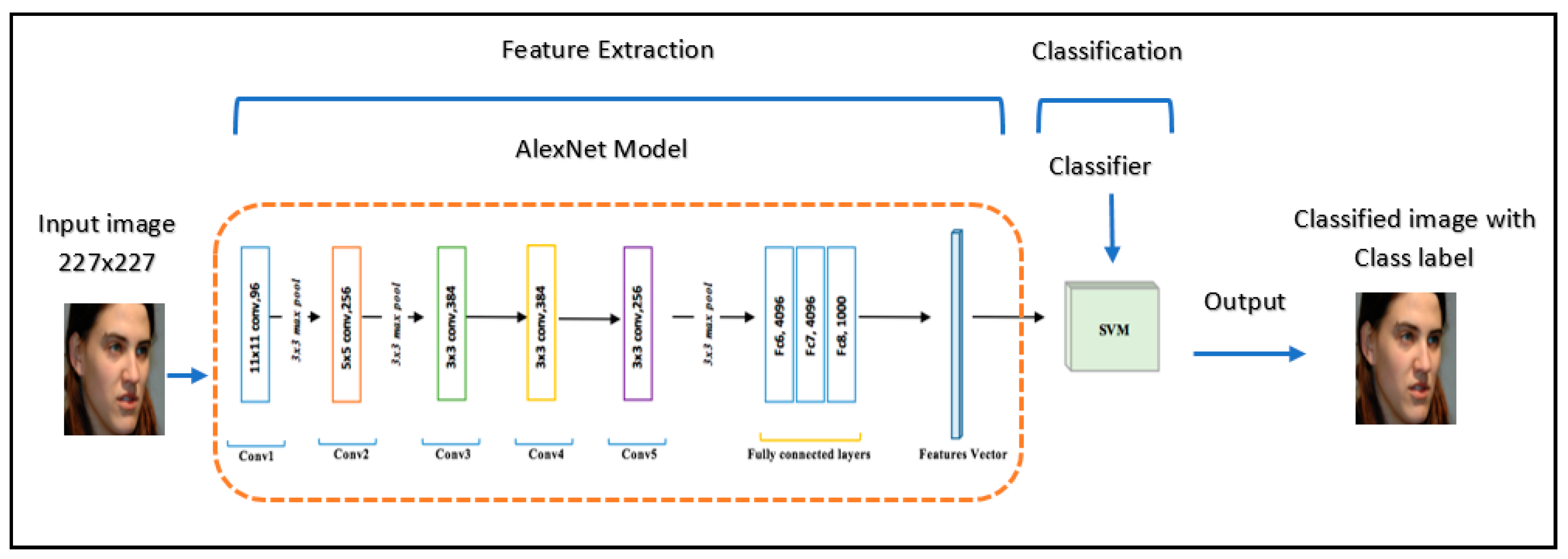

This is exactly the reason why deep learning has taken off so well: it does not rely on handcrafted features like SIFT that people had used for computer vision before but rather learns its own features from scratch. The classifiers themselves are still really simple and classical: almost all deep neural networks for classification end with a softmax layer, i.e., basically logistic regression. The trick is how to transform the space of images to a representation where logistic regression is enough, and that’s exactly where the rest of the network comes in. If you look at some earlier works you can find examples where people learn to extract features with deep neural networks and then apply other classifiers, such as SVMs (image source):
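To make the "softmax is basically logistic regression" point concrete, here is a minimal NumPy sketch of a classification head applied to features that are assumed to have already been extracted. The 512-dimensional feature size, the random weights, and the function names are illustrative assumptions, not part of any particular architecture.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


def classify(features: np.ndarray, weights: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """Linear layer followed by softmax, i.e. multinomial logistic regression on features."""
    return softmax(features @ weights + bias)


rng = np.random.default_rng(0)
# Assumption: 4 images, each already mapped to a 512-dim feature vector by some backbone.
features = rng.normal(size=(4, 512))
# Assumption: two classes (cat vs. dog) with untrained, randomly initialized weights.
weights = rng.normal(size=(512, 2)) * 0.01
bias = np.zeros(2)

probs = classify(features, weights, bias)
print(probs.shape)        # one row of class probabilities per image
print(probs.sum(axis=1))  # each row sums to 1
```

All the heavy lifting happens before `classify` is called: the backbone has to produce features for which this simple linear decision rule is good enough.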

But by now this is a rarity: once we have enough data to train state-of-the-art feature extractors, it’s much easier and quite sufficient to have a simple logistic regression at the end. And there are plenty of feature extractors for images that people have developed over the last decade: AlexNet, VGG, Inception, ResNet, DenseNet, EfficientNet…

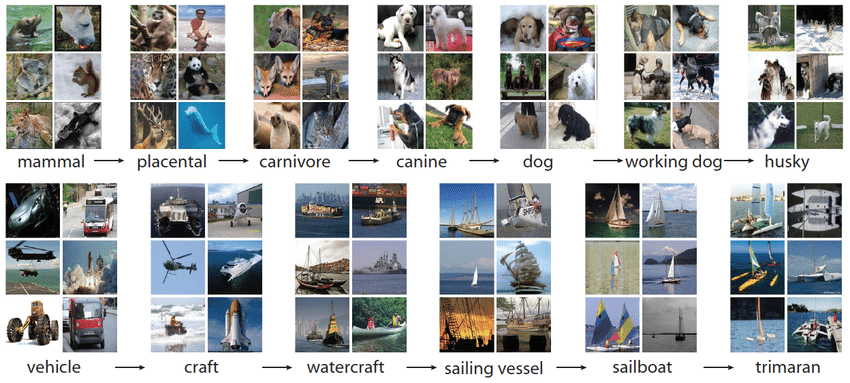

It would take much more than a blog post to explain them all, but the common thread is that you have a feature extraction backbone followed by a simple classification layer, and you train the whole thing end to end on a large image classification dataset, usually ImageNet, a huge manually labeled and curated dataset with more than 14 million images labeled with nearly 22000 classes that are organized in a semantic hierarchy (image source):

Once you are done, the network has learned to extract informative, useful features for real world photographic images, so even if your classes do not come from ImageNet it’s usually a matter of fine-tuning to adapt to this new information. You still need new data, of course, but usually not on the order of millions of images. Unless, of course, it’s a completely novel domain of images, such as X-rays or microscopy, where ImageNet won’t help as much. But we won’t go there today.

But vision doesn’t quite work that way. When I look around, I don’t just see a single label in my mind. I distinguish a lot of different objects within my field of view: right now I’m seeing a keyboard, my own hands, a monitor, a coffee cup, a web camera and so on, and so forth, all basically at the same time (let’s not split hairs over the saccadic nature of human vision right now: I would be able to distinguish all of these objects from a single still image just as well).

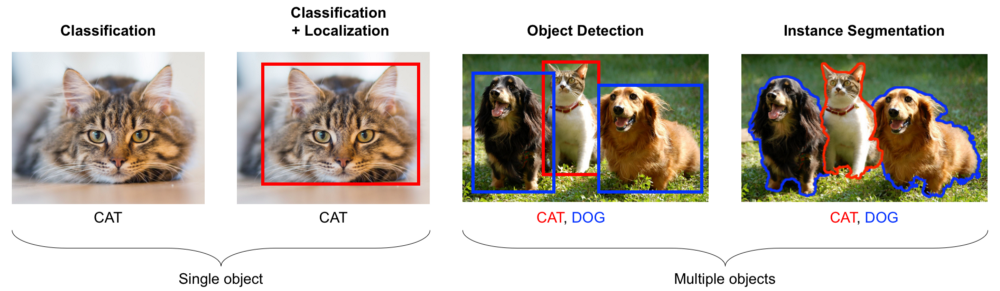

This means that we need to move on from classification, which assigns a single label to the whole image (you can assign several with multilabel classification models, but each of them will still refer to the entire image), to other problems that require more fine-grained analysis of the objects on images. People usually distinguish between several different problems:

As explained with cats and dogs right here (image source):

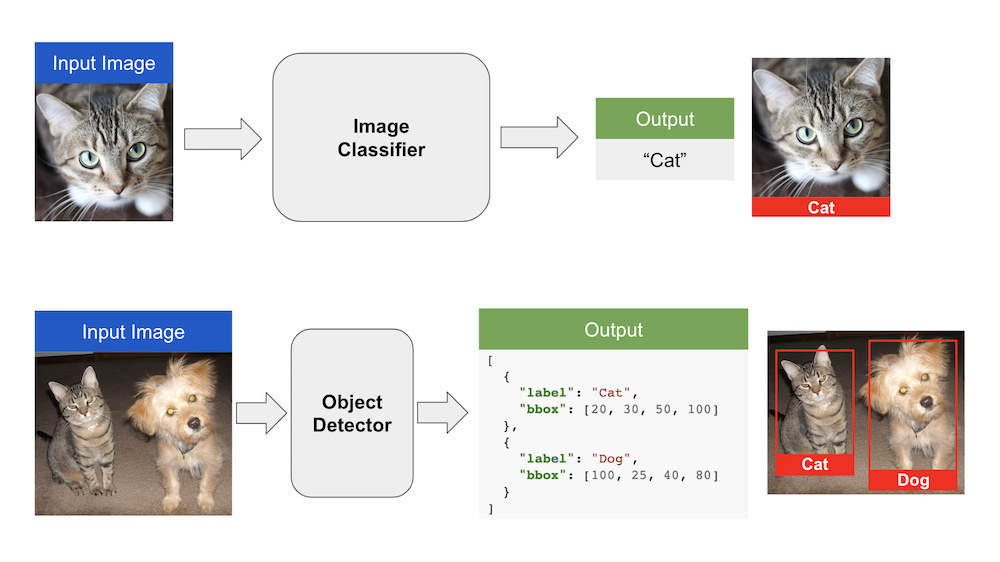

Mathematically, this means that the output of your network is no longer just a class label. It is now several (how many? that’s a very good question that we’ll have to answer somehow) different class labels, each with an associated rectangle. A rectangle is defined by four numbers (coordinates of two opposing corners, or one corner, width and height), so now each output is mathematically four numbers and a class label. Here is the difference (image source):
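The two rectangle encodings mentioned above (two opposing corners versus one corner plus width and height) carry the same information and are easy to convert between. Here is a small Python sketch; the `Detection` type and the function names are illustrative, not taken from any specific library.

```python
from typing import NamedTuple, Tuple


class Detection(NamedTuple):
    """One output of a detector: a class label plus four numbers (corner format)."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def corners_to_xywh(x_min: float, y_min: float, x_max: float, y_max: float) -> Tuple[float, float, float, float]:
    """Two opposing corners -> top-left corner, width, height."""
    return x_min, y_min, x_max - x_min, y_max - y_min


def xywh_to_corners(x: float, y: float, w: float, h: float) -> Tuple[float, float, float, float]:
    """Top-left corner, width, height -> two opposing corners."""
    return x, y, x + w, y + h


# A variable-length list of detections for a single (made-up) image.
detections = [
    Detection("cat", 10, 20, 110, 220),
    Detection("dog", 150, 40, 300, 260),
]

for det in detections:
    print(det.label, corners_to_xywh(*det[1:]))
```

Note that the output is a list whose length varies from image to image, which is exactly the "how many?" question raised above.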

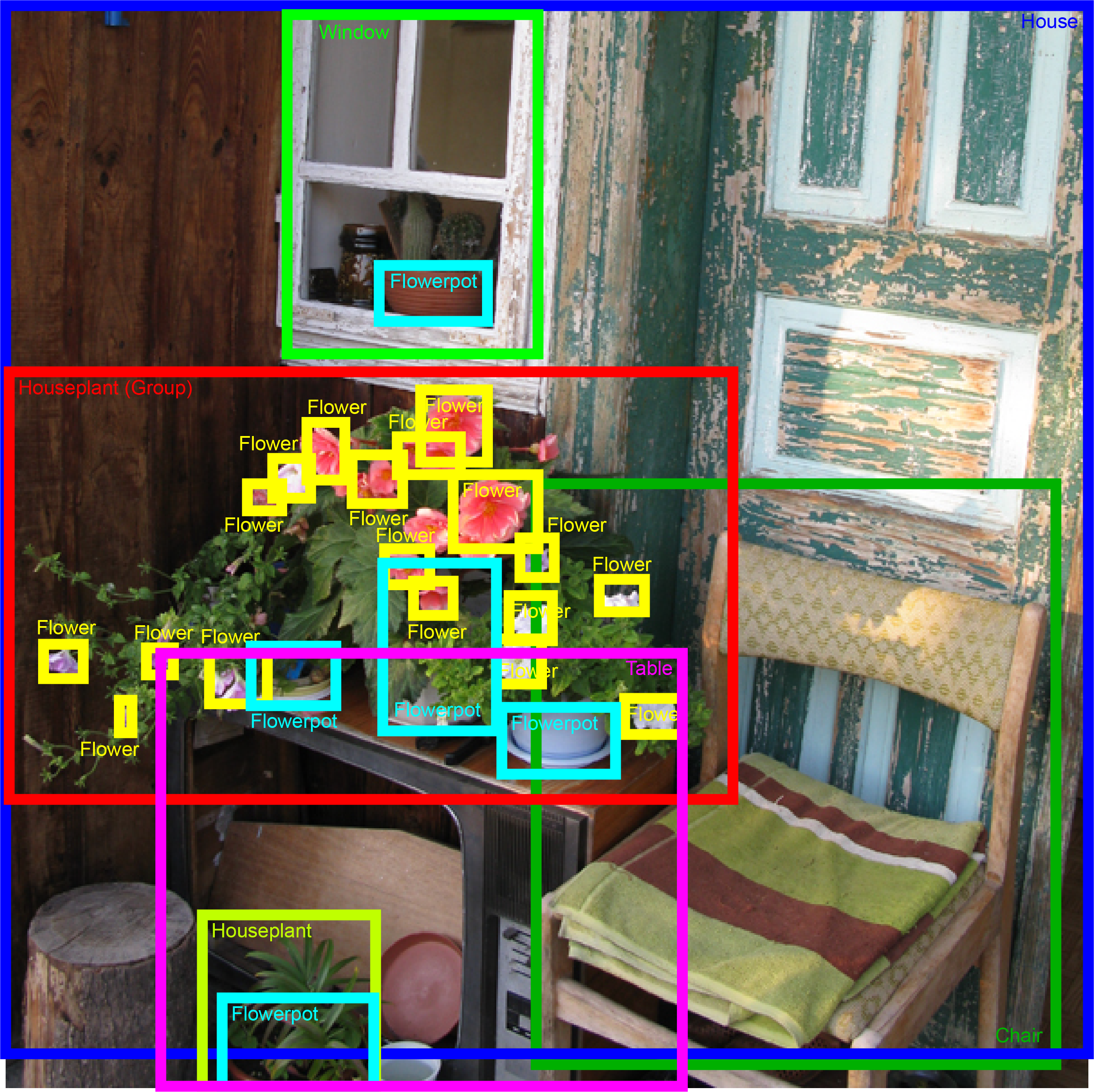

From the machine learning perspective, before we even start thinking about how to solve the problem, we need to find the data. The basic ImageNet dataset will not help: it is a classification dataset, so it has labels like “Cat”, but it does not have bounding boxes! Manual labeling is now a much harder problem: instead of just clicking on the correct class label you have to actually provide a bounding box for every object, and there may be many objects on a single photo. Here is a sample annotation for a generic object detection problem (image source):

You can imagine that annotating a single image by hand for object detection is a matter of whole minutes rather than seconds as it was for classification. So where can large datasets like this come from? Let’s find out.

Let’s first see what kind of object detection datasets we have with real objects and human annotators. To begin with, let’s quickly go over the most popular datasets, so popular that they are listed on the TensorFlow dataset page and have been used in thousands of projects.

The ImageNet dataset gained popularity as a key part of the ImageNet Large Scale Visual Recognition Challenges (ILSVRC), a series of competitions held from 2010 to 2017. The ILSVRC series saw some of the most interesting advances in convolutional neural networks: AlexNet, VGG, GoogLeNet, ResNet, and other famous architectures all debuted there.

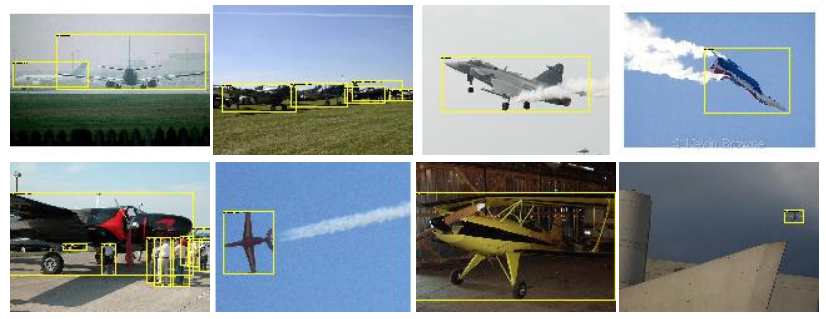

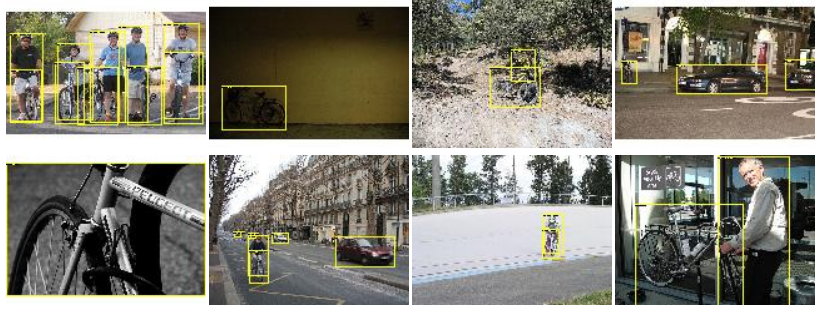

A lesser known fact is that ILSVRC always had an object detection competition as well, and the ILSVRC series actually grew out of a collaborative effort with another famous competition, the PASCAL Visual Object Classes (VOC) Challenge held from 2005 to 2012. These challenges also featured object detection from the very beginning, and this is where the first famous dataset comes from, usually known as the PASCAL VOC dataset. Here are some sample images for the “aeroplanes” and “bicycle” categories (source):

By today’s standards, PASCAL VOC is rather small: 20 classes and only 11530 images with 27450 object annotations, which means that PASCAL VOC has fewer than 2.5 objects per image on average. The objects are usually quite large and prominent on the photos, so PASCAL VOC is an “easy” dataset. Still, for a long time it was one of the largest manually annotated object detection datasets and was used by default in hundreds of papers on object detection.
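The "objects per image" figure is a simple ratio, and it is a handy first statistic to compute for any detection dataset. A tiny sketch using the PASCAL VOC numbers quoted above (the helper name is just for illustration):

```python
def objects_per_image(num_images: int, num_annotations: int) -> float:
    """Average number of annotated objects per image."""
    return num_annotations / num_images


voc_density = objects_per_image(num_images=11_530, num_annotations=27_450)
print(f"PASCAL VOC: {voc_density:.2f} objects per image")  # prints 2.38
```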

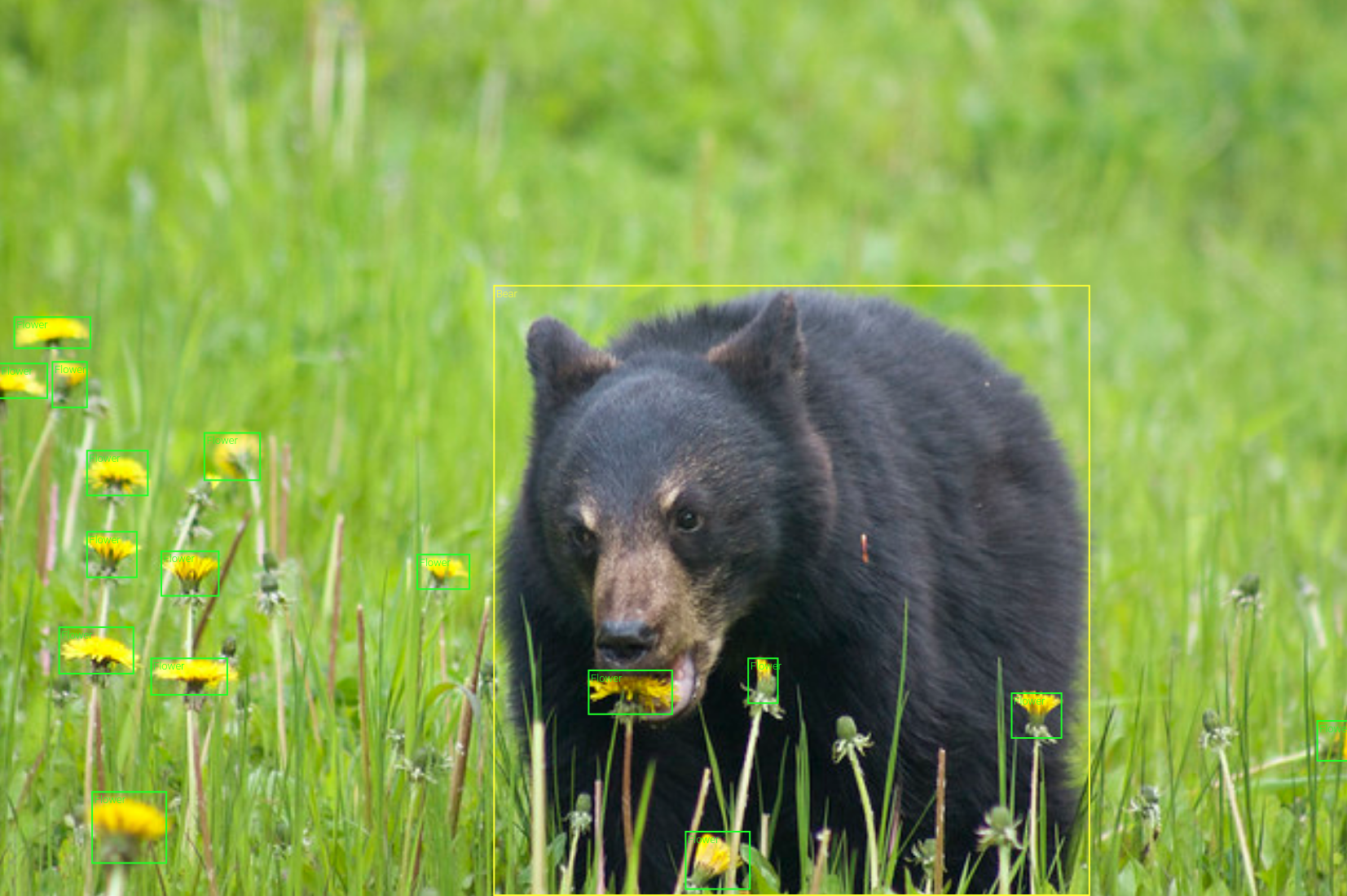

The next step up in both scale and complexity was the Microsoft Common Objects in Context (Microsoft COCO) dataset. By now, it has more than 200K labeled images with 1.5 million object instances, and it provides not only bounding boxes but also (rather crude) outlines for segmentation. Here are a couple of sample images:

As you can see, the objects are now more diverse, and they can have very different sizes. This is actually a big issue for object detection: it’s hard to make a single network detect both large and small objects well, and this is the major reason why MS COCO proved to be a much harder dataset than PASCAL VOC. The dataset is still very relevant, with competitions in object detection, instance segmentation, and other tracks held every year.

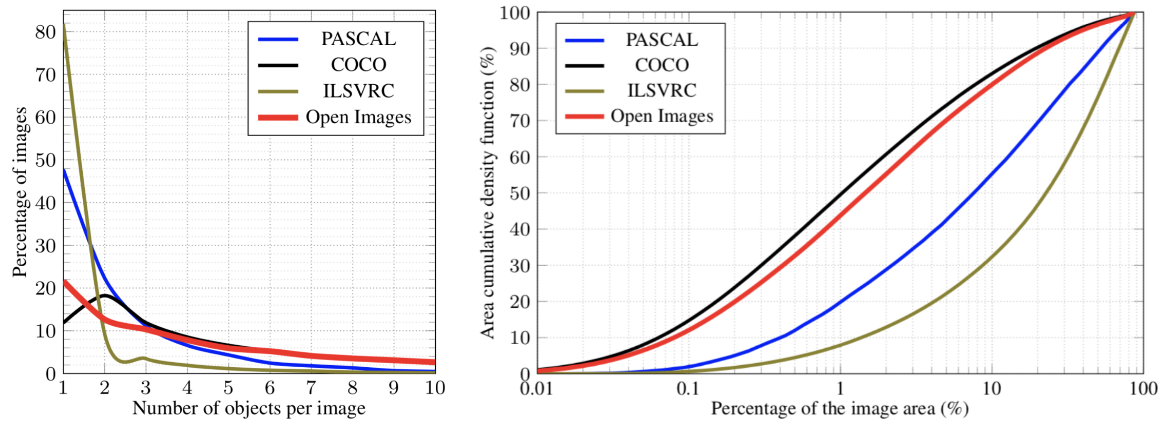

The last general-purpose object detection dataset that I want to talk about is by far the largest available: Google’s Open Images Dataset. By now, they are at Open Images V6, and it has about 1.9 million images with 16 million bounding boxes for 600 object classes. This amounts to about 8.4 bounding boxes per image, so the scenes are quite complex, and the number of objects is also more evenly distributed:

Examples look interesting, diverse, and sometimes very complicated:

Actually, Open Images was made possible by advances in object detection itself. As we discussed above, it is extremely time-consuming to draw bounding boxes by hand. Fortunately, at some point existing object detectors became so good that we could delegate the bounding boxes to machine learning models and use humans only to verify the results. That is, you can set the model to a relatively low sensitivity threshold, so that you won’t miss anything important, but the result will probably have a lot of false positives. Then you ask a human annotator to confirm the correct bounding boxes and reject false positives.
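The propose-then-verify workflow can be sketched in a few lines of Python. Everything here is a toy illustration under stated assumptions: the `Proposal` type, the 0.2 threshold, the hard-coded detector output, and the simulated annotator are all hypothetical and do not describe how Open Images was actually produced.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Proposal:
    label: str
    box: Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max)
    score: float                    # detector confidence in [0, 1]


def propose(proposals: List[Proposal], threshold: float = 0.2) -> List[Proposal]:
    """Keep everything above a deliberately low threshold: few misses, many false positives."""
    return [p for p in proposals if p.score >= threshold]


def verify(candidates: List[Proposal], is_correct: Callable[[Proposal], bool]) -> List[Proposal]:
    """A human only answers yes/no for each candidate box instead of drawing boxes."""
    return [p for p in candidates if is_correct(p)]


# Hypothetical raw detector output for one image.
detector_output = [
    Proposal("car", (12, 30, 200, 180), 0.92),
    Proposal("person", (220, 40, 260, 170), 0.35),
    Proposal("dog", (5, 5, 20, 20), 0.25),           # a false positive in this toy example
    Proposal("bicycle", (300, 90, 380, 170), 0.10),  # below threshold, never shown to a human
]


def simulated_annotator(p: Proposal) -> bool:
    """Stand-in for a human; assumes the image really contains a car and a person."""
    return p.label in {"car", "person"}


candidates = propose(detector_output, threshold=0.2)
accepted = verify(candidates, simulated_annotator)
print([p.label for p in accepted])
```

The design choice is the asymmetry of effort: rejecting a wrong box takes a single click, while drawing a missing box takes far longer, so it pays to err on the side of too many proposals.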

As far as I know, this paradigm shift occurred in object detection around 2016, after a paper by Papadopoulos et al. It is much more manageable, and this is how Open Images became possible, but it is still a lot of work for human annotators, so only giants like Google can afford to put out an object detection dataset on this scale.

There are, of course, many more object detection datasets, usually for more specialized applications: these three are the primary datasets that cover general-purpose object detection. But wait, this is a blog about synthetic data, and we haven’t yet said a word about it! Let’s fix that.

With a dataset like Open Images, the main question becomes: why do we need synthetic data for object detection at all? It looks like Open Images is almost as large as ImageNet, and we haven’t heard much about synthetic data for image classification.

For object detection, the answer lies in the details and specific use cases. Yes, Open Images is large, but it does not cover everything that you may need. A case in point: suppose you are building a computer vision system for a self-driving car. Sure, Open Images has the category “Car”, but you need much, much more detail: different types of cars in different traffic situations, streetlights, various types of pedestrians, traffic signs, and so on. If all you needed was to solve an image classification problem, you would create your own dataset for the new classes with a few thousand images per class, label it manually for a few hundred dollars, and fine-tune the network for the new classes. In object detection and especially segmentation, it doesn’t work quite as easily.

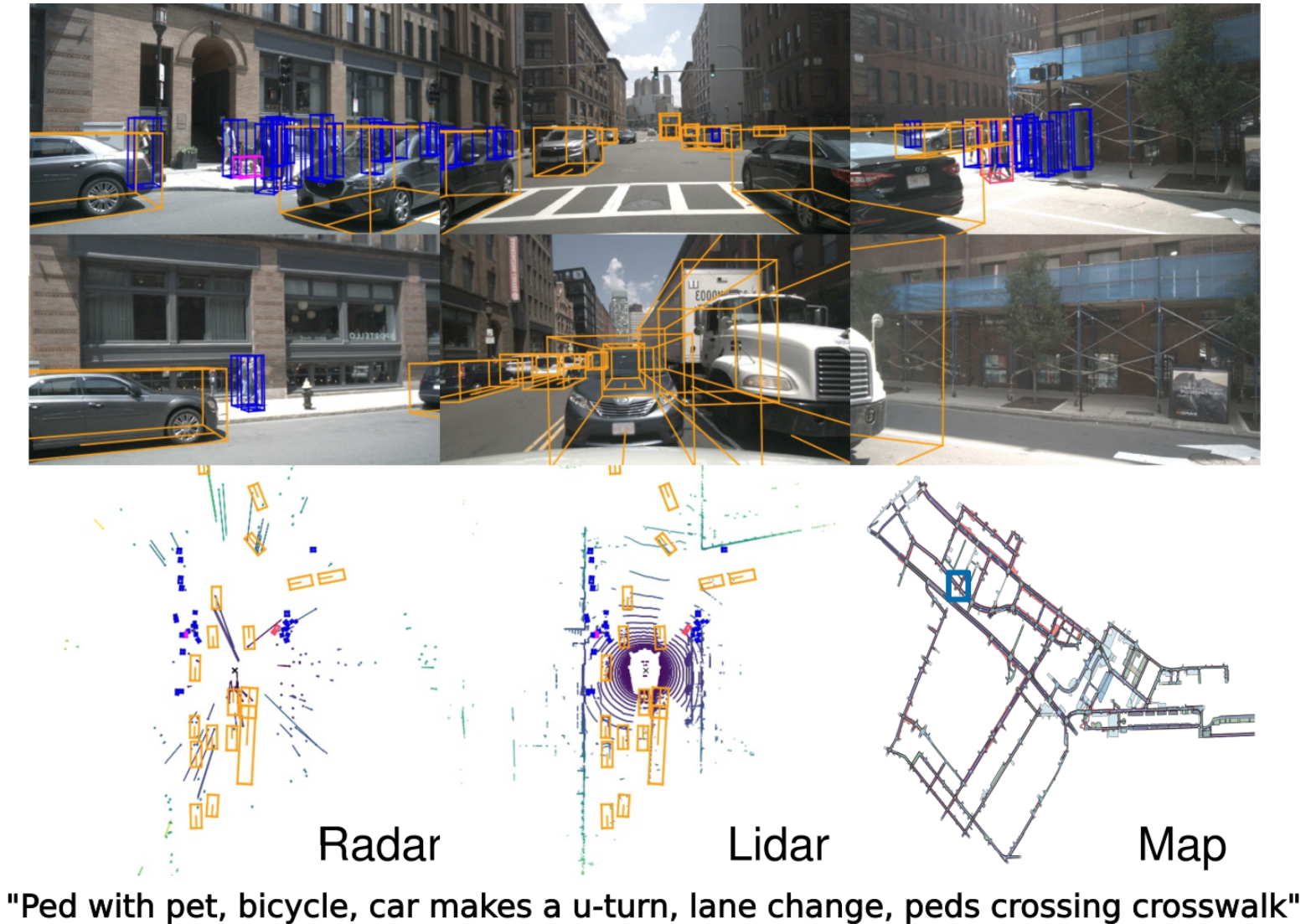

Consider one of the latest and largest real datasets for autonomous driving: nuScenes by Caesar et al.; the paper, by the way, has been accepted for CVPR 2020. They create a full-scale dataset with 6 cameras, 5 radars, and a lidar, fully annotated with 3D bounding boxes (a new standard as we move towards 3D scene understanding) and human scene descriptions. Here is a sample of the data:

And all this is done in video! So what’s the catch? Well, the nuScenes dataset contains 1000 scenes, each 20 seconds long with keyframes sampled at 2 Hz, so there are about 40000 annotated keyframes in total, in groups of 40 that are very similar (they come from the same scene). Labeling this kind of data was already a big and expensive undertaking.
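The arithmetic behind that estimate is worth spelling out, using only the figures quoted above (the variable names are just for illustration):

```python
num_scenes = 1000    # scenes in the dataset
scene_seconds = 20   # length of each scene, in seconds
keyframe_hz = 2      # annotated keyframes per second

keyframes_per_scene = scene_seconds * keyframe_hz
total_keyframes = num_scenes * keyframes_per_scene

print(keyframes_per_scene)  # 40 highly correlated keyframes per scene
print(total_keyframes)      # 40000 annotated keyframes overall
```

Since the 40 keyframes of a scene are so similar to each other, the number of truly different situations is closer to the number of scenes than to the number of keyframes.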

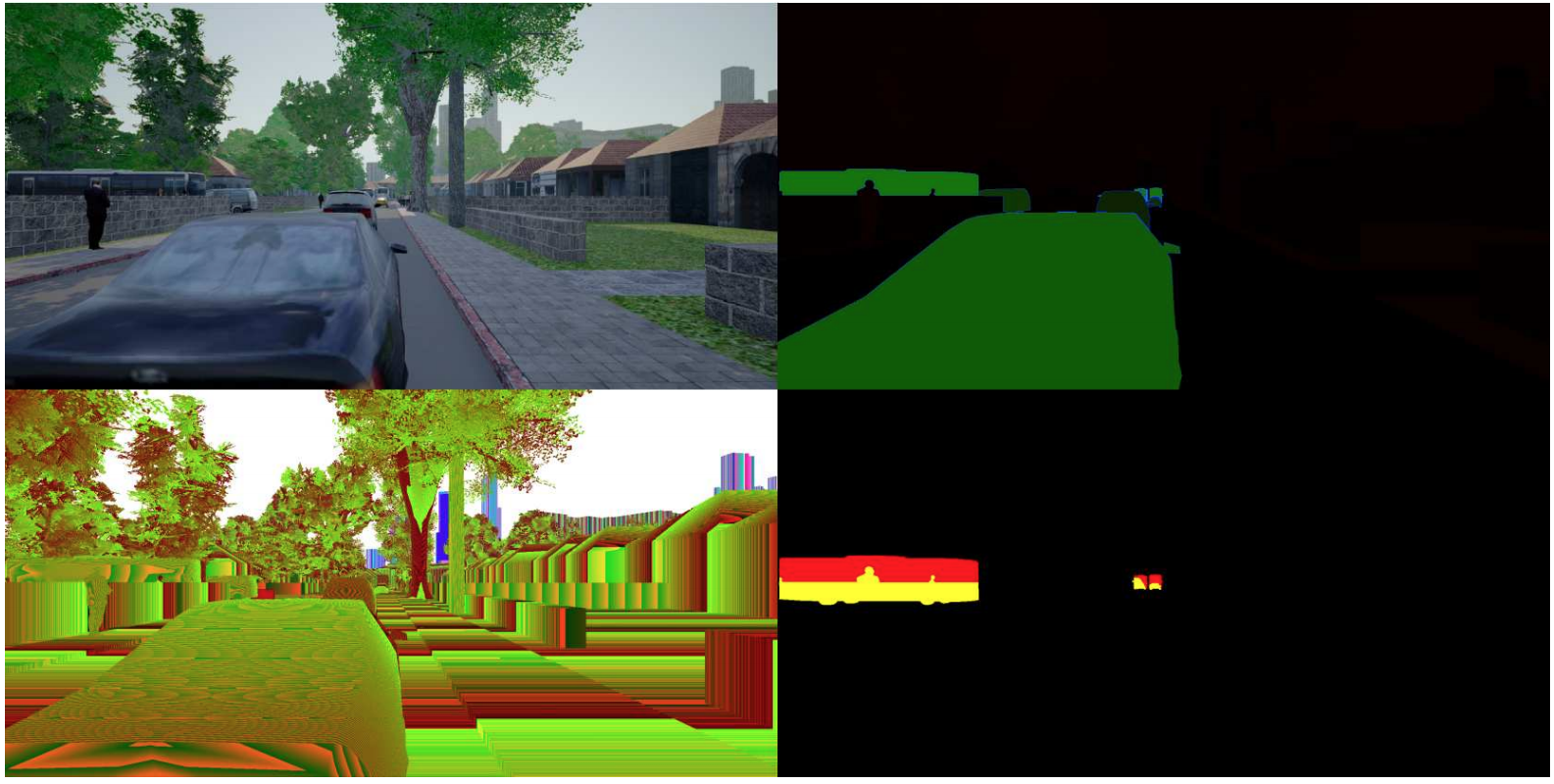

Compare this with a synthetic dataset for autonomous driving called ProcSy. It features pixel-perfect segmentation (with synthetic data, there is no difference, you can ask for segmentation as easily as for bounding boxes) and depth maps for urban scenes with traffic constructed with the CityEngine by Esri and then rendered with Unreal Engine 4. It looks something like this (with segmentation, depth, and occlusion maps):

In the paper, Khan et al. concentrate on comparing the performance of different segmentation models under inclement weather conditions and other factors that may complicate the problem. For this purpose, they only needed a small data sample of 11000 frames, and that’s what you can download from the website above (the compressed archives already take up to 30 GB, by the way). They report that this dataset was randomly sampled from 1.35 million available road scenes. But the most important part is that the dataset was generated procedurally, so in fact it is a potentially infinite stream of data where you can vary the maps, types of traffic, weather conditions, and more.
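To illustrate what "a potentially infinite stream of data" means in practice, here is a toy Python generator that keeps sampling scene configurations. This is only a sketch of the idea and not the ProcSy pipeline: the `SceneConfig` fields, the weather list, and the number of maps are hypothetical, and a real system would pass each configuration to a renderer that outputs images together with their labels.

```python
import itertools
import random
from dataclasses import dataclass
from typing import Iterator


@dataclass(frozen=True)
class SceneConfig:
    """Hypothetical parameters of one procedurally generated road scene."""
    map_id: int
    weather: str
    traffic_density: float  # 0.0 = empty road, 1.0 = heavy traffic


WEATHER = ("clear", "rain", "fog", "snow")  # assumed set of conditions


def scene_stream(seed: int = 0, num_maps: int = 100) -> Iterator[SceneConfig]:
    """Endless stream of random scene configurations; reproducible for a fixed seed."""
    rng = random.Random(seed)
    while True:
        yield SceneConfig(
            map_id=rng.randrange(num_maps),
            weather=rng.choice(WEATHER),
            traffic_density=rng.uniform(0.0, 1.0),
        )


# Take as many configurations as needed; here, just five.
batch = list(itertools.islice(scene_stream(seed=42), 5))
for config in batch:
    print(config)
```

Because every label comes from the generator itself, asking for more data is a matter of compute time rather than annotator hours.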

This is the main draw of synthetic data: once you have made a single upfront investment into creating (or, better to say, finding and adapting) 3D models of your objects of interest, you are all set to have as much data as you can handle. And if you make an additional investment, you can even move on to full-scale interactive 3D worlds, but this is, again, a story for another day.

Today, we have discussed the basics of object detection. We have seen what kind of object detection datasets exist and how synthetic data can help with problems where humans have a really hard time labeling millions of images. Note that we haven’t said a single word about how to do object detection: we will come to this in later installments, and in the next one we will review several interesting synthetic object detection datasets. Stay tuned!

Sergey Nikolenko

Head of AI, Synthesis AI